Meta replaced "ranking engineers" with AI

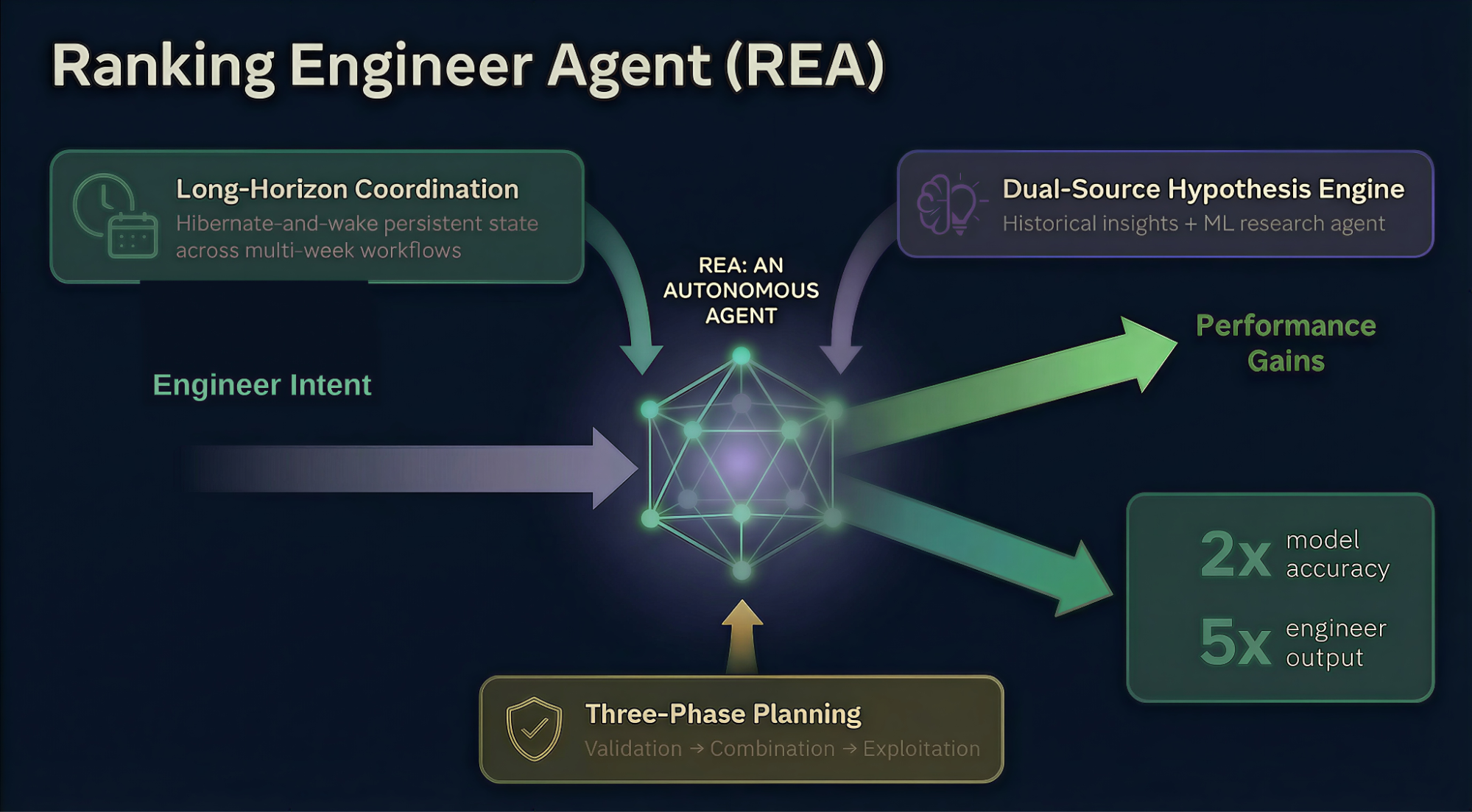

On March 17, 2026, the Meta Engineering Blog published a post on REA (Ranking Engineer Agent). As the name suggests, the role of "an engineer who improves the ranking algorithm" is now being handled by an autonomous AI agent.

The numbers that matter to advertisers:

- 2x model accuracy improvement — REA's automated iterations average 2x vs. the prior baseline

- 5x engineer output: 3 engineers shipped 8 model improvements, work that previously needed 2 engineers per model
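The 5x figure can be reproduced with back-of-the-envelope arithmetic. This assumes the historical rate of 2 engineers per model applies to all 8 improvements. Meta's exact accounting may differ.

```python
# Back-of-the-envelope check of the "5x engineer output" claim.
models_improved = 8
engineers_per_model_before = 2  # historical staffing per model (assumed to apply to all 8)
engineers_with_rea = 3

engineers_needed_before = models_improved * engineers_per_model_before
output_multiplier = engineers_needed_before / engineers_with_rea

print(f"{engineers_needed_before} engineers -> {engineers_with_rea}: {output_multiplier:.2f}x")
# 16 engineers -> 3: 5.33x
```

That works out to roughly 5.3x, which rounds to the headline 5x.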

Source: Meta Engineering — Ranking Engineer Agent (REA)

The bottleneck in traditional ML experimentation

The thousands of ranking models powering Meta's ad system have to be improved continuously. The traditional process:

1. An engineer designs a hypothesis

2. Designs the experiment and writes config files

3. Kicks off the training job

4. Debugs failures that surface days later, then reruns

5. Analyzes the results and forms the next hypothesis

One cycle takes days to weeks. As models mature, the headroom for improvement shrinks, and engineers end up stuck in the loop.

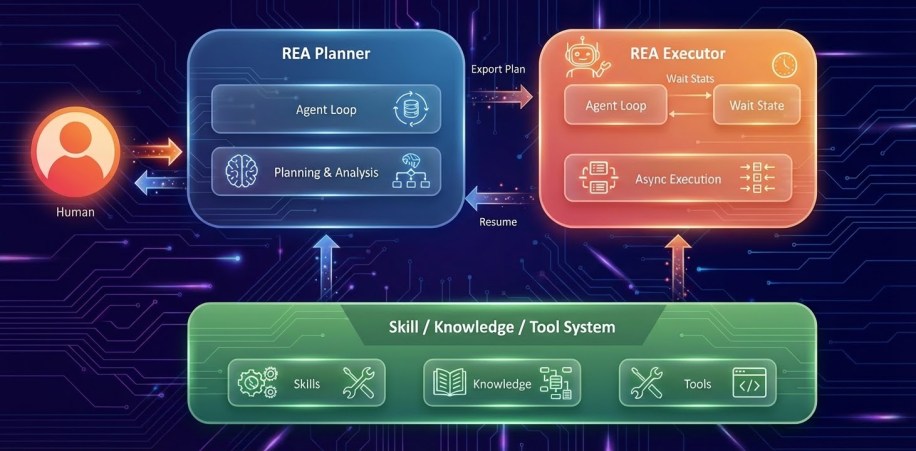

What REA automates

REA runs steps 1-5 autonomously. Key capabilities:

- Auto-hypothesis generation — proposes the next hypothesis based on existing model performance, data, and recent experiment results

- Training job execution and monitoring — manages multi-day jobs via a hibernate-and-wake mechanism

- Failure debugging — auto-navigates a complex codebase to find root causes

- Result analysis and iteration: automatically sets up the next experiment when one finishes
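Meta's post names a hibernate-and-wake mechanism but, as summarized here, does not describe how it works. The sketch below shows one common way to build something similar. It assumes the agent saves its state to a checkpoint on disk, goes idle, and is woken on a schedule to poll the training job. Every name here is hypothetical, and the job itself is simulated. That includes `AgentState`, `hibernate`, `wake`, `supervise`, the JSON checkpoint, and the status strings.

```python
import json
import tempfile
from dataclasses import asdict, dataclass
from pathlib import Path
from typing import Callable

# Hypothetical sketch of a hibernate-and-wake pattern; not REA's actual code.

@dataclass
class AgentState:
    job_id: str
    hypothesis: str
    wakes: int = 0
    status: str = "RUNNING"

def hibernate(state: AgentState, path: Path) -> None:
    # Persist everything needed to resume; nothing stays in memory while asleep.
    path.write_text(json.dumps(asdict(state)))

def wake(path: Path, poll: Callable[[str], str]) -> AgentState:
    # Restore from the checkpoint, then check the training job exactly once.
    state = AgentState(**json.loads(path.read_text()))
    state.wakes += 1
    state.status = poll(state.job_id)
    return state

def supervise(state: AgentState, path: Path, poll: Callable[[str], str], max_wakes: int = 100) -> AgentState:
    hibernate(state, path)
    for _ in range(max_wakes):  # each iteration stands in for one scheduled wake-up
        state = wake(path, poll)
        if state.status in ("SUCCEEDED", "FAILED"):
            return state  # hand off to result analysis or failure debugging
        hibernate(state, path)  # still running: checkpoint and go back to sleep
    raise TimeoutError(f"job {state.job_id} did not finish after {max_wakes} wake-ups")

# Simulated multi-day job: two check-ins while running, then success.
statuses = iter(["RUNNING", "RUNNING", "SUCCEEDED"])
with tempfile.TemporaryDirectory() as tmp:
    final = supervise(
        AgentState(job_id="job-123", hypothesis="add feature X"),
        Path(tmp) / "agent_state.json",
        poll=lambda job_id: next(statuses),
    )
print(final.status, final.wakes)  # SUCCEEDED 3
```

The key design choice is keeping all state in the checkpoint. Nothing has to stay alive in memory for the days a job runs. A restart or a long wait costs nothing beyond re-reading the file.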

Humans step in only at strategic decision points, roughly at the level of approving a direction; REA handles the execution.

How this differs from existing AI tools

Tools like ChatGPT and Copilot are assistants: one-shot help like "interpret this log" or "tighten this hypothesis." The engineer still strings the steps together.

REA is an autonomous agent: it runs from start to finish on its own. Even when a single experiment takes days, it stays attached and manages the experiment throughout.
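The difference can be sketched as a loop the agent drives itself. This is a toy illustration, not REA's implementation. `propose_hypothesis`, `run_training`, and `autonomous_loop` are hypothetical stand-ins, and the "training" is a deterministic fake. The only human input is the `approve` callback, which acts at the direction level.

```python
from typing import Callable

# Hypothetical stand-ins: none of these are REA APIs.
def propose_hypothesis(step: int) -> str:
    # A real agent would condition on model metrics, data, and past results.
    return f"hypothesis-{step}"

def run_training(hypothesis: str, attempt: int) -> int:
    # Toy training job returning an accuracy gain in basis points.
    # It fails on the first attempt for hypothesis-2 so the debug path runs.
    if hypothesis == "hypothesis-2" and attempt == 0:
        raise RuntimeError("config error")
    return 10 * int(hypothesis.rsplit("-", 1)[1])

def autonomous_loop(approve: Callable[[str], bool], iterations: int, max_retries: int = 1):
    history: list[tuple[str, int]] = []
    log: list[str] = []
    for step in range(1, iterations + 1):
        hypothesis = propose_hypothesis(step)
        if not approve(hypothesis):  # the only human touchpoint: direction approval
            log.append(f"rejected {hypothesis}")
            continue
        for attempt in range(max_retries + 1):
            try:
                gain = run_training(hypothesis, attempt)
                break
            except RuntimeError as err:
                # A real agent would diagnose the root cause here; the toy logs and reruns.
                log.append(f"debugged {hypothesis}: {err}")
        else:
            continue  # retries exhausted; move on to the next hypothesis
        history.append((hypothesis, gain))  # recorded results; a real agent would use them next round
    return history, log

history, log = autonomous_loop(approve=lambda h: h != "hypothesis-3", iterations=4)
print(history)  # [('hypothesis-1', 10), ('hypothesis-2', 20), ('hypothesis-4', 40)]
print(log)      # ['debugged hypothesis-2: config error', 'rejected hypothesis-3']
```

An assistant would stop after each step and wait for the engineer to trigger the next one. Here, the rerun after a failure and the hand-off from one experiment to the next both happen inside the loop.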

What changes for advertisers

The ranking algorithm improvement cycle gets shorter.

- Get used to month-scale drift: big updates used to land quarterly, but in the REA era subtler tuning lands every 2-4 weeks. Expect CPA spikes at the start of the month and stabilization by month-end to become increasingly common

- Build a routine for detecting algorithm changes: the answer to "why did CPA change this week?" is increasingly likely to be "it's not our account, it's the algorithm." Stop adjusting budget and targeting every week; judge performance on 2-week average CPA

- Advantage+ and GEM benefits compound faster: REA keeps improving downstream models → GEM updates propagate faster → Advantage+ users feel it first
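A 2-week average CPA is best computed as total spend divided by total conversions over the trailing 14 days. Taking the mean of daily CPAs instead overweights low-volume days. The sketch below is minimal and assumes daily rows of (spend, conversions). The numbers are made up purely to show the smoothing effect.

```python
from typing import List, Optional, Tuple

def trailing_cpa(days: List[Tuple[float, int]], window: int = 14) -> List[Optional[float]]:
    """Trailing CPA per day: total spend / total conversions over the last `window` days."""
    result: List[Optional[float]] = []
    for i in range(len(days)):
        if i + 1 < window:
            result.append(None)  # not enough history yet
            continue
        recent = days[i + 1 - window : i + 1]
        spend = sum(s for s, _ in recent)
        conversions = sum(c for _, c in recent)
        result.append(spend / conversions if conversions else None)
    return result

# Illustrative numbers only: flat $100/day spend, conversions alternating 4 and 6.
days = [(100.0, 4 if d % 2 == 0 else 6) for d in range(14)]
daily_cpa = [spend / conv for spend, conv in days]

print(round(min(daily_cpa), 2), round(max(daily_cpa), 2))  # 16.67 25.0
print(trailing_cpa(days)[-1])                               # 20.0
```

In this example, daily CPA swings between about 16.67 and 25.0, while the 14-day figure stays at 20.0. A simple mean of the daily CPAs would report about 20.83 instead.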

So what about us?

Don't:

- Adjust budget on daily CPA swings: touching anything while the algorithm is re-rolling wastes learning cycles

- Rush to diagnose "it worked fine yesterday, what happened?": give it 24-48 hours first

Do:

- Make weekly reports a habit. Keep daily variance out of the report and use weekly averages

- Gradually expand Advantage+ share — REA benefits land first in the automated surfaces

- Accelerate creative supply: faster-evolving algorithms also mean faster creative fatigue. Treat 1-2 new creatives per week as the baseline
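A weekly report along these lines can be sketched by bucketing daily rows into ISO weeks. Several details are assumptions for illustration: the row layout (day, spend, conversions, new creatives), the field names, and the threshold of 1 new creative per week (the low end of the baseline above). The data is invented.

```python
from collections import defaultdict
from datetime import date, timedelta

def weekly_report(rows, min_new_creatives=1):
    """Roll daily (day, spend, conversions, new_creatives) rows up into ISO weeks."""
    # (iso_year, iso_week) -> [spend, conversions, new creatives]
    weeks = defaultdict(lambda: [0.0, 0, 0])
    for day, spend, conversions, new_creatives in rows:
        iso_year, iso_week = day.isocalendar()[:2]
        totals = weeks[(iso_year, iso_week)]
        totals[0] += spend
        totals[1] += conversions
        totals[2] += new_creatives
    report = []
    for (iso_year, iso_week), (spend, conversions, creatives) in sorted(weeks.items()):
        report.append({
            "week": f"{iso_year}-W{iso_week:02d}",
            # Weekly CPA = weekly spend / weekly conversions, so daily noise averages out.
            "cpa": round(spend / conversions, 2) if conversions else None,
            "new_creatives": creatives,
            "below_creative_baseline": creatives < min_new_creatives,
        })
    return report

start = date(2026, 3, 2)  # a Monday
rows = [
    (start + timedelta(days=d), 100.0, 4 if d % 2 == 0 else 6, 1 if d in (0, 3) else 0)
    for d in range(14)
]
for row in weekly_report(rows):
    print(row)
# {'week': '2026-W10', 'cpa': 20.59, 'new_creatives': 2, 'below_creative_baseline': False}
# {'week': '2026-W11', 'cpa': 19.44, 'new_creatives': 0, 'below_creative_baseline': True}
```

ISO weeks keep every bucket Monday-to-Sunday. That makes week-over-week comparisons line up even across month boundaries.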

A spoonful of skepticism

The numbers Meta published for REA ("2x accuracy," "5x output") are Meta-internal experiment metrics. The impact advertisers actually see will likely be much smaller: a 2x gain in model accuracy does not translate directly into CPA being cut in half.

Still, the direction is clear. "Ranking engineer velocity x 5" = "Algorithm change velocity x 5." Teams that internalize an operating style adapted to that speed will be ahead.

What's next

Meta said, "This post only covered the ML experimentation piece. Other REA capabilities will be covered in follow-up posts." Deployment, A/B testing, and emergency response are among the areas being automated in sequence. We'll cover the updates when they land.

The structural frame for performance analysis, A/B testing, and scaling is covered in Meta Ads Book 4.